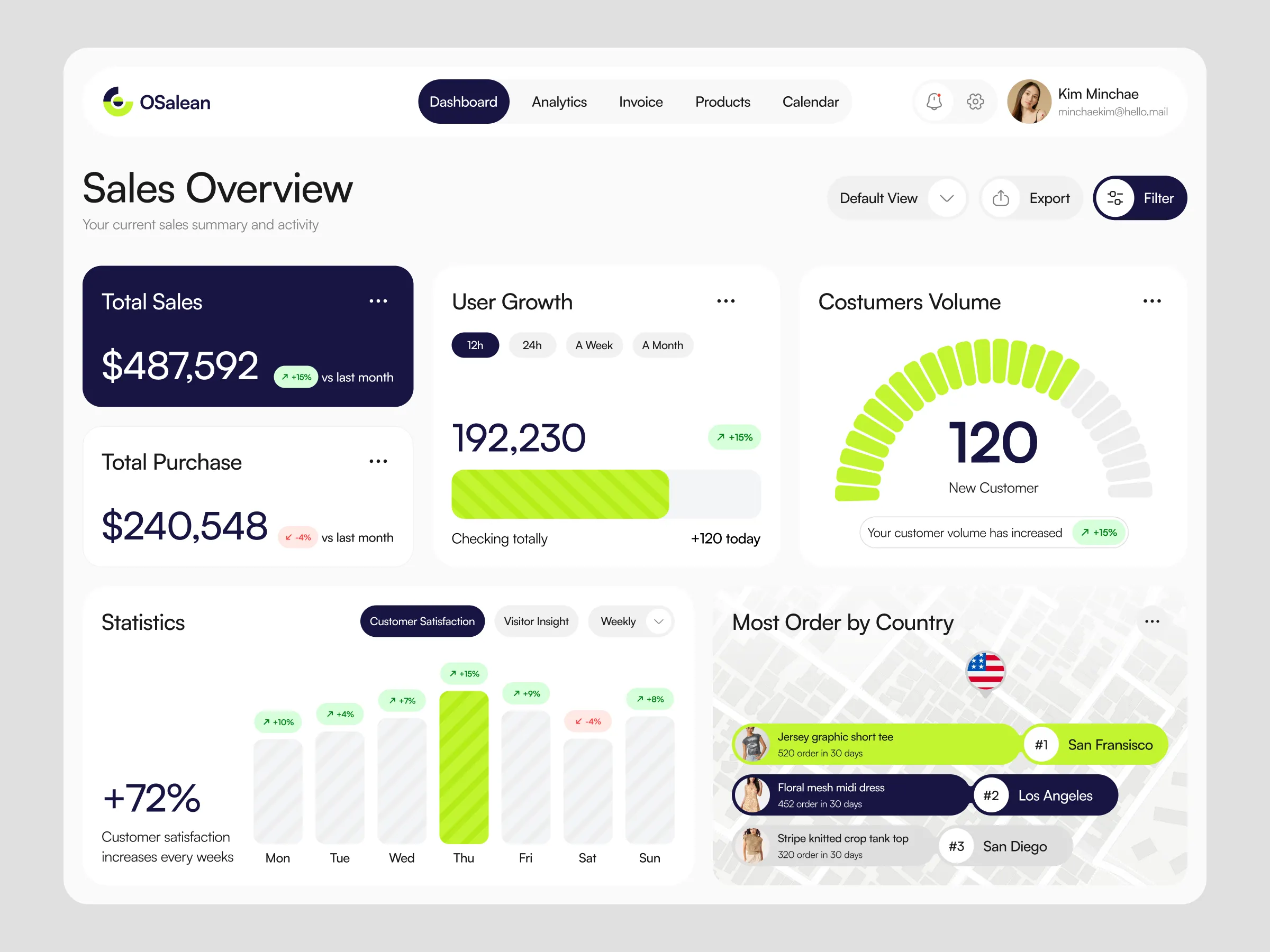

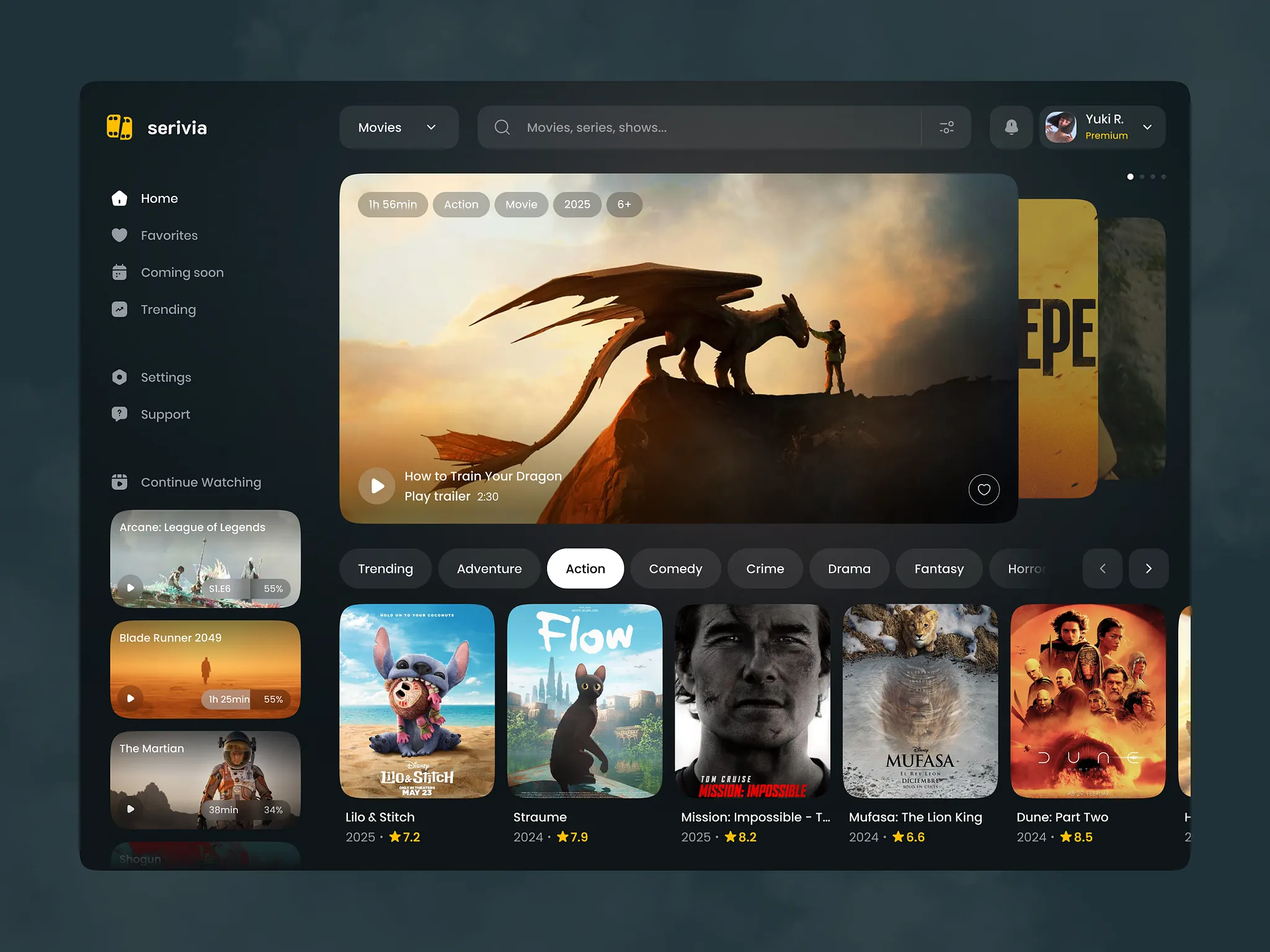

Subscription Streaming Platform

Architected a scalable mobile streaming system with secure content delivery, JWT authentication, and microservices deployed on AWS.

Year: 2023

Category: SaaS / AWS

Stack: NestJS, Flutter, PostgreSQL

Key Results

8K subscribers in first quarter

99.97% uptime over launch period

0 critical bugs in first 30 days

10 wks time to launch from kickoff

01

The Challenge

A media startup needed to launch a subscription streaming product for mobile audiences in Southeast Asia on a tight timeline. They had the content library but no platform, no billing system, and no mobile app.

02

What We Built

A NestJS microservices backend on AWS with CloudFront for CDN delivery and S3 for content storage. Stripe powers subscription billing with three tiers. JWT with refresh token rotation handles secure sessions. The Flutter app supports offline downloads for low-connectivity environments.

03

Results

Launched on schedule with 0 critical bugs in the first 30 days. The platform scaled to 8,000 subscribers in Q1 with 99.97% uptime. Stripe dunning recovered 18% of failed payments. The client expanded to two additional markets within six months.

04

Before & After

Before

Content existed only as raw files on a shared drive with no delivery infrastructure

No billing system — the team manually invoiced a handful of early users

No mobile app — users had no way to access content on their phones

Zero visibility into usage — no analytics on what content was being watched

After

Every title delivered globally via CloudFront CDN with adaptive bitrate streaming

Three-tier Stripe subscription with automatic proration, trials, and dunning management

Flutter app on iOS and Android with offline downloads for low-connectivity regions

Real-time watch events streamed to a dashboard showing performance per title

05

How We Built It

Ten weeks from kickoff to App Store. Infrastructure and app development ran in parallel after agreeing on the API contract in week one.

API contract & infrastructure setup

Defined the full REST and gRPC API contracts in week one — REST for Flutter-to-NestJS, gRPC for all service-to-service calls. Provisioned AWS ECS, S3, CloudFront, RDS, and the RabbitMQ cluster so both teams could work independently against mocked endpoints.

gRPC service mesh & NestJS microservices

Wrote .proto files defining all inter-service contracts. Built the auth, content, and billing services as separate NestJS services communicating via gRPC. This replaced JSON-over-REST calls between services with compact binary messages and caught schema mismatches at compile time.

RabbitMQ async media pipeline

Designed exchanges and queues for all async operations: download_requested, download_completed, sync_device, subscription_changed, and watch_progress. Built the download service as a dedicated NestJS RabbitMQ consumer with retry logic and dead-letter queues for failed jobs.
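As a rough illustration of the retry and dead-letter behaviour described above, here is a minimal TypeScript sketch. It only models the queue declarations and the per-message decision, without a broker client. The exchange and queue names, the three-attempt limit, and the backoff schedule are assumptions for illustration; only the five event names come from the project. The `x-dead-letter-exchange` and `x-dead-letter-routing-key` keys are standard RabbitMQ queue arguments.

```typescript
// Event names from the project; everything else below is a hypothetical sketch.
type EventType =
  | "download_requested"
  | "download_completed"
  | "sync_device"
  | "subscription_changed"
  | "watch_progress";

interface QueueSpec {
  exchange: string;
  queue: string;
  deadLetterQueue: string;
  // Arguments that would be passed when declaring the queue on the broker.
  arguments: Record<string, string>;
}

const DEAD_LETTER_EXCHANGE = "media.dlx"; // assumed name

// One exchange, one work queue, and one dead-letter queue per event type.
function queueSpecFor(event: EventType): QueueSpec {
  const deadLetterQueue = `${event}.dlq`;
  return {
    exchange: `media.${event}`,
    queue: `${event}.q`,
    deadLetterQueue,
    arguments: {
      "x-dead-letter-exchange": DEAD_LETTER_EXCHANGE,
      "x-dead-letter-routing-key": deadLetterQueue,
    },
  };
}

type Outcome =
  | { action: "ack" }
  | { action: "retry"; attempt: number; delayMs: number }
  | { action: "dead-letter" };

const MAX_ATTEMPTS = 3; // assumed limit

// Decide what a consumer does after handling attempt number `attempt` (1-based).
function decide(succeeded: boolean, attempt: number): Outcome {
  if (succeeded) return { action: "ack" };
  if (attempt >= MAX_ATTEMPTS) return { action: "dead-letter" };
  // Exponential backoff: 1s after the first failure, 2s after the second.
  return { action: "retry", attempt: attempt + 1, delayMs: 1000 * 2 ** (attempt - 1) };
}
```

Keeping the decision in a pure function like `decide` makes the retry policy easy to unit test separately from the consumer that talks to RabbitMQ.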

Flutter app — streaming, downloads & cross-device sync

Built the Flutter app with video_player for streaming, background_downloader for offline content, and a WebSocket subscription to RabbitMQ-driven events for real-time download progress and cross-device state sync.

Load testing & App Store submission

Ran k6 load tests simulating 10k concurrent users and chaos tests against RabbitMQ with simulated broker failures. Tuned CloudFront cache policies and ECS autoscaling. Submitted to App Store and Play Store in week nine.

06

System Architecture

Three independent NestJS services behind an API Gateway. Each owns its own DB schema and scales independently. CloudFront sits in front of S3 for all media delivery — the app never touches S3 directly.

API Gateway

AWS API Gateway

Single entry point for all Flutter client requests. Routes to auth, content, or billing service. Handles rate limiting before traffic hits NestJS.

gRPC Layer

gRPC + Protocol Buffers

All service-to-service communication uses gRPC: auth to content for subscription validation, content to download service for media requests. Strongly typed contracts via .proto files mean schema mismatches between services surface at compile time rather than at runtime.

Auth Service

NestJS + JWT + Redis

Issues short-lived access tokens (15 min) and stores long-lived refresh tokens in Redis. Exposes gRPC endpoints that the content and billing services call to validate tokens without REST round-trips.
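Refresh token rotation can be sketched roughly as follows. This is a simplified illustration, not the production service: a `Map` stands in for Redis, no JWT is actually signed, and the 30-day refresh lifetime and all class and field names are assumptions. Only the 15-minute access token lifetime comes from the project.

```typescript
import { randomBytes } from "node:crypto";

const ACCESS_TTL_MS = 15 * 60 * 1000; // 15 minutes, as in the project
const REFRESH_TTL_MS = 30 * 24 * 60 * 60 * 1000; // assumed: 30 days

interface RefreshRecord {
  userId: string;
  expiresAt: number;
}

interface Session {
  userId: string;
  refreshToken: string;
  accessExpiresAt: number; // when the newly issued access token would expire
}

class RefreshTokenStore {
  // In-memory stand-in for Redis; a real store would set a TTL on each key.
  private readonly tokens = new Map<string, RefreshRecord>();

  issue(userId: string, now: number = Date.now()): string {
    const token = randomBytes(32).toString("hex");
    this.tokens.set(token, { userId, expiresAt: now + REFRESH_TTL_MS });
    return token;
  }

  // Rotation: the presented token is deleted and replaced, so each
  // refresh token can be used at most once.
  rotate(token: string, now: number = Date.now()): Session | null {
    const record = this.tokens.get(token);
    if (!record) return null; // unknown or already used
    this.tokens.delete(token);
    if (record.expiresAt <= now) return null; // expired
    return {
      userId: record.userId,
      refreshToken: this.issue(record.userId, now),
      accessExpiresAt: now + ACCESS_TTL_MS,
    };
  }
}
```

Because a rotated token is removed from the store, replaying a stolen refresh token after the legitimate client has refreshed returns `null` instead of a new session.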

Content Service

NestJS + S3 + CloudFront

Manages the content catalogue, generates signed CloudFront URLs for streaming, and publishes download_requested events to RabbitMQ when a user queues offline content.

Message Broker

RabbitMQ

All async media operations flow through RabbitMQ — download jobs, cross-device sync events, watch progress propagation, and playback state changes. Each operation type has its own exchange and queue with dead-letter handling for failed jobs.

Download Service

NestJS + RabbitMQ consumer

Dedicated NestJS worker that consumes download_requested messages from RabbitMQ. Fetches HLS segments from S3, packages them for offline use, and publishes download_completed events back so all of a user's devices sync state in real time, similar to Spotify's offline sync model.

Billing Service

NestJS + Stripe

Handles Stripe webhook events and updates access tier in PostgreSQL. Publishes subscription_changed events to RabbitMQ so the content and download services update tier enforcement without polling.

Media Storage

AWS S3 + CloudFront

HLS-encoded video files in S3, served via CloudFront with signed URLs. Multi-region edge caching gives Southeast Asian users sub-100ms time-to-first-byte.

Mobile App

Flutter + Riverpod

Single codebase for iOS and Android. Subscribes to RabbitMQ-driven push events via WebSocket for real-time download progress and cross-device playback state sync.

07

Tech Stack

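Backend: NestJS, gRPC, RabbitMQ, Redis

Mobile: Flutter, Riverpod

Infrastructure: AWS ECS, API Gateway, S3, CloudFront

Database: PostgreSQL (AWS RDS)

Payments: Stripe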

08

How We Approached the Problem

The biggest architectural decision was how services talk to each other. With several independent NestJS services, we needed a communication model that was fast, type-safe, and wouldn't collapse under load. gRPC for synchronous calls and RabbitMQ for all async media operations gave us the Netflix/Spotify-style architecture the client wanted: services are decoupled, a failure in one does not cascade to the others, and cross-device sync is driven by events rather than polling.

Alternatives considered & rejected

Single monolithic NestJS app

Content delivery and billing have very different scaling profiles. Separating them with gRPC service-to-service calls meant we could scale the content and download services independently during peak hours.

Kafka instead of RabbitMQ

Kafka is the right choice for high-throughput event streaming at Spotify scale. At our volume, RabbitMQ's per-queue routing, dead-letter handling, and simpler ops story were a better fit. We can migrate to Kafka if the client hits 100k+ concurrent users.

REST for inter-service communication

With several services calling each other, REST would have meant JSON-over-HTTP overhead on every internal call and no compile-time contract enforcement. gRPC with .proto files gave us typed contracts, bidirectional streaming for media ops, and ~7x faster serialization than JSON.

React Native instead of Flutter

The client had an existing Flutter codebase for a separate product. Reusing the same framework and Dart developers was faster than switching ecosystems.

09

Data Modelling

Each NestJS service owns its schema. Auth owns users and sessions. Content owns titles, episodes, and watch history. Billing owns subscriptions and invoices. Cross-service queries go through API calls — never direct DB access.

10

API Layer

NestJS modules map to services. The content module validates the user's subscription tier before generating a signed CloudFront URL — the Flutter client never receives raw S3 paths.
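The tier check in front of URL signing might look something like the sketch below. The tier names, the `Title` shape, the CDN hostname, and the five-minute URL lifetime are all hypothetical. The signer is injected as a plain function so the example does not depend on any AWS SDK call.

```typescript
// Hypothetical tier names; the project only states that there are three tiers.
type Tier = "basic" | "standard" | "premium";

const TIER_RANK: Record<Tier, number> = { basic: 0, standard: 1, premium: 2 };

interface Title {
  id: string;
  minTier: Tier; // lowest tier allowed to stream this title
  hlsPath: string; // path of the HLS manifest behind the CDN
}

// Signs a CDN URL that expires at the given epoch second. Injected so the
// real CloudFront signing code can be swapped in separately.
type UrlSigner = (resourceUrl: string, expiresAtEpochSec: number) => string;

type StreamUrlResult =
  | { ok: true; url: string }
  | { ok: false; reason: "tier_too_low" };

const CDN_BASE = "https://cdn.example.com"; // placeholder hostname

function issueStreamUrl(
  userTier: Tier,
  title: Title,
  sign: UrlSigner,
  nowEpochSec: number,
  ttlSec: number = 300, // assumed lifetime of a signed URL
): StreamUrlResult {
  // Validate the subscription tier before any URL is generated.
  if (TIER_RANK[userTier] < TIER_RANK[title.minTier]) {
    return { ok: false, reason: "tier_too_low" };
  }
  // Only the signed CDN URL is returned; the S3 location never reaches the client.
  return { ok: true, url: sign(`${CDN_BASE}/${title.hlsPath}`, nowEpochSec + ttlSec) };
}
```

Returning a result object rather than throwing lets the controller map `tier_too_low` to an upgrade prompt in the app.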

11

Database Functions

Two PostgreSQL functions handle the most frequent read patterns: continue-watching list on app open, and upsert watch position called every 10 seconds during playback.
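The semantics of those two read and write patterns can be illustrated with a small in-memory model in TypeScript. This is not the PostgreSQL code itself: the field names, the composite key, and the rule that a title counts as finished at 95% of its duration are assumptions made for the sketch.

```typescript
interface WatchPosition {
  userId: string;
  episodeId: string;
  positionSec: number;
  durationSec: number;
  updatedAt: number; // epoch milliseconds of the last update
}

const FINISHED_RATIO = 0.95; // assumed: past 95% counts as finished

class WatchHistory {
  // One row per (user, episode), mirroring a unique key in the table.
  private readonly rows = new Map<string, WatchPosition>();

  // Upsert: insert the row if it is new, otherwise overwrite the position.
  upsertPosition(p: WatchPosition): void {
    this.rows.set(`${p.userId}:${p.episodeId}`, p);
  }

  // Continue watching: unfinished episodes for one user, most recent first.
  continueWatching(userId: string, limit: number = 10): WatchPosition[] {
    return Array.from(this.rows.values())
      .filter((r) => r.userId === userId && r.positionSec < r.durationSec * FINISHED_RATIO)
      .sort((a, b) => b.updatedAt - a.updatedAt)
      .slice(0, limit);
  }
}
```

In SQL the upsert would typically be an `INSERT ... ON CONFLICT ... DO UPDATE` on the same composite key, which keeps the every-10-seconds write to a single statement.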

12

Frontend Connection

The Flutter app uses Riverpod with a VideoRepository layer. The PlaybackController fetches signed URLs from NestJS and passes them to video_player. Watch position syncs every 10 seconds via a background timer.

13

Lessons Learned

Sign URLs server-side, always

An early prototype generated signed URLs client-side using temporary AWS credentials, exposing key material in the app bundle. Moving signing to NestJS meant credentials never leave the server.

Stripe webhooks need idempotency checks

Stripe can deliver the same event more than once. Our first handler processed duplicate payment_succeeded events and granted double subscription extensions. Idempotency checks on the Stripe event ID fixed it.
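A minimal sketch of that idempotency check is shown below, assuming a `Set` as a stand-in for a table with a unique index on the Stripe event ID. The class and handler names are hypothetical, and the event shape is reduced to the `id` and `type` fields that Stripe events carry.

```typescript
// Reduced event shape: only the fields this sketch needs.
interface StripeEventLike {
  id: string; // unique per event, repeated on redelivery
  type: string;
}

type Handler = (event: StripeEventLike) => void;

class WebhookProcessor {
  // Stand-in for a database table with a unique index on event ID.
  private readonly seen = new Set<string>();
  private readonly handle: Handler;

  constructor(handle: Handler) {
    this.handle = handle;
  }

  process(event: StripeEventLike): "processed" | "duplicate" {
    if (this.seen.has(event.id)) return "duplicate";
    this.handle(event);
    // Record the ID only after the handler succeeds, so a failed
    // attempt can still be retried when Stripe redelivers the event.
    this.seen.add(event.id);
    return "processed";
  }
}
```

With a real database, the check and the side effect would normally share one transaction so that two concurrent deliveries of the same event cannot both pass the check.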

Pre-initialise video_player before the user taps play

Cold-start latency was 2-3 seconds. Pre-initialising the controller when the user navigates to the title detail page — before they tap play — reduced perceived latency to under 500ms.

HLS segment size affects offline storage significantly

With the default 10-second segments, a paused or interrupted download still had to fetch a whole segment, which wasted storage. Switching to 2-second segments reduced wasted storage by ~40%.
