Enterprise GPT Knowledge Assistant

Designed and deployed a secure document-aware chatbot with vector search, embeddings, and context-aware responses for internal knowledge workflows.

Year

2024

Category

LLM / Search

Stack

Django, OpenAI, Pinecone

Key Results

41%

Fewer Support Tickets

in first month

60%

Faster Onboarding

for new hires

1,200+

Weekly Queries

handled automatically

94%

Staff Satisfaction

from internal survey

01

The Challenge

A 200-person professional services firm needed employees to query internal knowledge — HR policies, project templates, client SOPs — without bothering senior staff or digging through SharePoint. Knowledge was siloed, new hires took weeks to ramp up, and senior staff fielded the same questions repeatedly.

02

What We Built

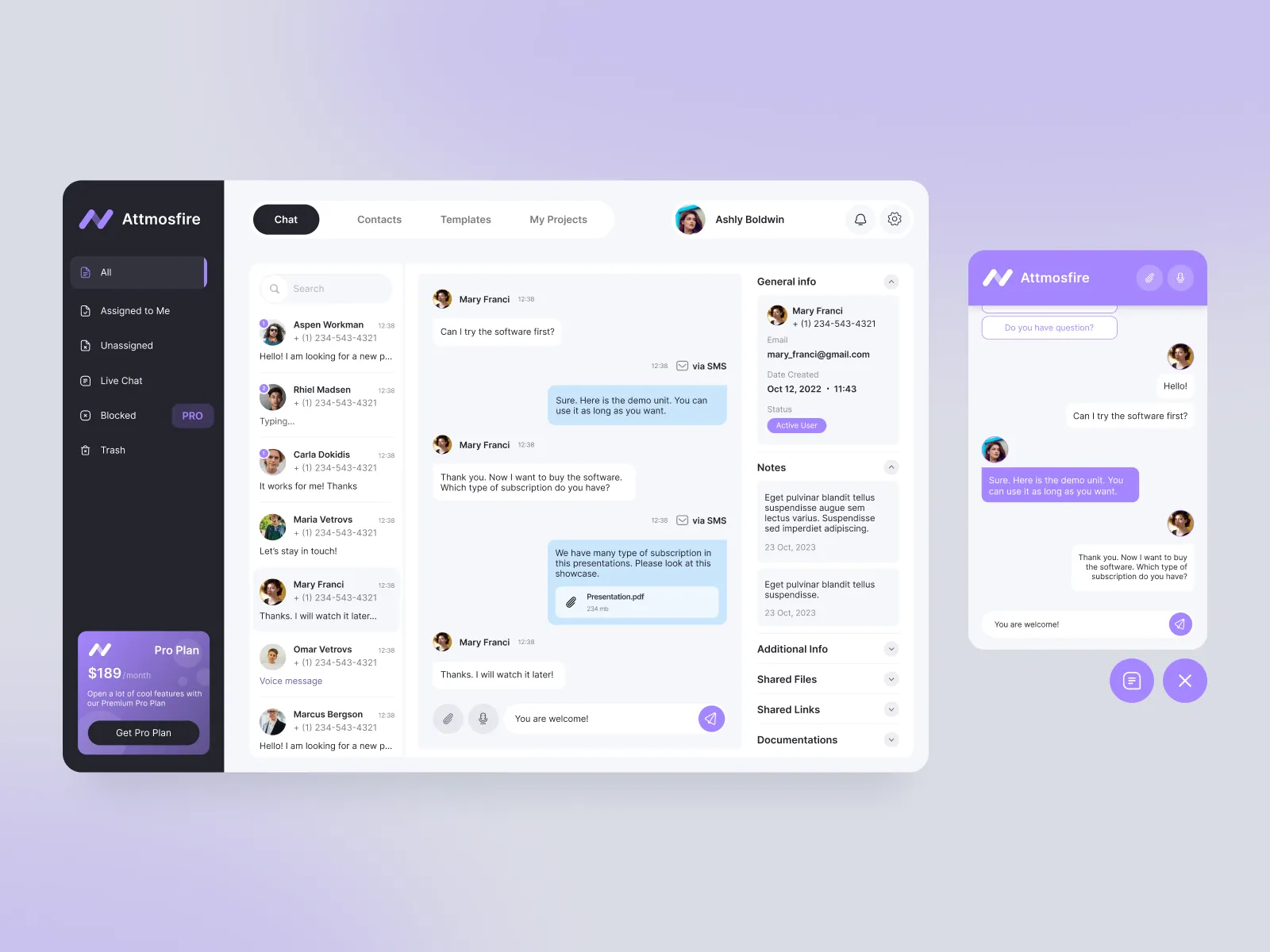

A secure internal chatbot with role-based document access. Django powers the API and auth layer, Pinecone handles vector storage with one namespace per permission role, and Auth0 provides SSO. The Next.js frontend streams responses token-by-token and surfaces citations inline.

03

Results

Internal support tickets dropped 41% in the first month. Onboarding time shortened by an estimated 60%. The assistant handles over 1,200 queries per week, and 94% of staff reported being satisfied in an internal survey. The firm rolled the assistant out to two partner offices within the first quarter.

04

Before & After

Before

Employees emailed HR or senior staff for every policy question — answers took hours or days

New hires spent weeks shadowing colleagues just to learn where information lived

Knowledge was siloed by department — no cross-team visibility into SOPs or templates

No audit trail — impossible to know if employees were getting accurate answers

After

Any employee gets a cited answer from the assistant in seconds

Onboarding cut by 60% — new hires query the assistant independently from day one

A single interface surfaces relevant knowledge across all permitted departments

Every response is grounded in source documents with traceable citations

05

How We Built It

We designed the permission model before touching AI. Getting access control right first meant we never had to retrofit security onto a working system.

Permission model & Auth0 setup

Designed the role-to-namespace mapping, configured Auth0 SSO, extended Django's user model, and validated that JWT claims could drive Pinecone namespace resolution end-to-end.

Document ingestion pipeline

Built Celery workers for chunking, OpenAI embedding, and role-scoped Pinecone upserts. Established the convention that Auth0 role names mirror Pinecone namespaces exactly.

Django query API with SSE streaming

Implemented the DRF query view, QueryService with multi-namespace retrieval and re-ranking, and the StreamingHttpResponse SSE pipeline.

Next.js chat interface

Built the useChatStream hook, streaming chat UI, citation chip rendering, and server-component conversation history loading. Deployed to Vercel.

06

System Architecture

A three-tier architecture with an AI retrieval layer between the API and LLM. Django owns business logic and permission enforcement. Pinecone owns vector search. Next.js owns the UI.

Auth Layer

Auth0 + Django

Auth0 handles SSO and issues JWTs. Django validates the token on every request and resolves the user's role and Pinecone namespaces before any query reaches Pinecone.

Document Ingestion

Django + Celery + OpenAI

Uploaded documents are queued in Celery, chunked into 400-token segments, embedded via OpenAI, and upserted into the role-scoped Pinecone namespace.

Vector Store

Pinecone

One namespace per permission role. Metadata on each vector stores document_id, chunk_index, and page. Each query targets a specific namespace, so similarity search only runs over vectors that role is allowed to see.

Query API

Django REST Framework

Receives a question, resolves the user's namespaces, queries Pinecone for top-k chunks, assembles the prompt, and streams the OpenAI response as SSE.

LLM Layer

OpenAI GPT-4o

A structured system prompt instructs the model to answer only from the provided context and to cite its sources. Temperature is set to 0 to keep responses as repeatable as possible.

Frontend

Next.js + TypeScript

Server components fetch conversation history. A client component connects to the Django SSE endpoint and renders tokens as they stream, with citation chips inline.
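The ingestion step in the architecture above can be sketched roughly as follows. This is a minimal illustration, not the project's worker code: whitespace-separated words stand in for model tokens, and the names `Chunk`, `chunk_page`, and `batched` are hypothetical. The 400-token segment size, the three metadata fields, and the batch size of 100 come from this write-up.

```python
from dataclasses import dataclass
from typing import Iterator, Sequence

CHUNK_TOKENS = 400   # segment size stated in the write-up
UPSERT_BATCH = 100   # batch size mentioned under lessons learned


@dataclass(frozen=True)
class Chunk:
    """One segment plus the metadata stored alongside its vector."""
    document_id: str
    chunk_index: int
    page: int
    text: str


def chunk_page(document_id: str, page: int, text: str, start_index: int = 0) -> list[Chunk]:
    """Split one page into segments of at most CHUNK_TOKENS tokens.

    Assumption: whitespace-separated words approximate tokens. A real
    pipeline would count tokens with the embedding model's tokenizer.
    """
    words = text.split()
    chunks: list[Chunk] = []
    for offset in range(0, len(words), CHUNK_TOKENS):
        chunks.append(Chunk(
            document_id=document_id,
            chunk_index=start_index + len(chunks),
            page=page,
            text=" ".join(words[offset:offset + CHUNK_TOKENS]),
        ))
    return chunks


def batched(items: Sequence[Chunk], size: int = UPSERT_BATCH) -> Iterator[Sequence[Chunk]]:
    """Yield consecutive slices so each upsert call carries at most `size` items."""
    for start in range(0, len(items), size):
        yield items[start:start + size]
```

Each batch would then be embedded and upserted into the namespace that matches the document's permission role.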

07

Tech Stack

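Backend

Django, Django REST Framework, Celery

AI / ML

OpenAI GPT-4o, OpenAI embeddings

Vector Store

Pinecone

Database

PostgreSQL

Auth

Auth0

Frontend

Next.js, TypeScript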

08

How We Approached the Problem

The core constraint was access control: employees in different roles must not see each other's documents. We designed the permission model first. Every document's vectors live in the Pinecone namespace for its permission role, and every query is restricted server-side to the namespaces the user's roles allow.
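Server-side scope resolution could look roughly like this. It is a sketch under stated assumptions: the token has already been validated, role names arrive in a custom claim, and each role name doubles as a Pinecone namespace name. The claim key `https://example.com/roles` and the names `resolve_namespaces` and `NoPermittedNamespaces` are hypothetical.

```python
from typing import Any, Mapping

# Hypothetical claim key, not taken from the project.
ROLES_CLAIM = "https://example.com/roles"


class NoPermittedNamespaces(Exception):
    """Raised when a token carries no usable roles."""


def resolve_namespaces(claims: Mapping[str, Any], known_namespaces: set[str]) -> list[str]:
    """Map role names in already-validated JWT claims to Pinecone namespaces.

    Assumes signature and expiry checks happened earlier in the request.
    Roles without a matching namespace are dropped, never guessed.
    """
    roles = claims.get(ROLES_CLAIM, [])
    if not isinstance(roles, list):
        raise NoPermittedNamespaces("roles claim is not a list")
    # The role name is the namespace name, so no mapping table is consulted.
    namespaces = sorted(
        {r for r in roles if isinstance(r, str) and r in known_namespaces}
    )
    if not namespaces:
        raise NoPermittedNamespaces("no role maps to a known namespace")
    return namespaces
```

Failing closed when no namespace resolves means a misconfigured role produces an error rather than an unscoped query.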

Alternatives considered & rejected

Single shared vector namespace

A single namespace makes per-user filtering possible but expensive at scale. Namespace-per-role gave hard isolation and faster retrieval.

FastAPI instead of Django

The firm's user and permission tables already lived in a Django app. DRF let us reuse the existing ORM models, permission classes, and admin panel without rebuilding auth.

Vercel AI SDK for streaming

The Vercel AI SDK assumes a Next.js API route as the streaming origin. Our backend was Django, so we implemented SSE directly with StreamingHttpResponse.
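For illustration, the framing side of a hand-rolled SSE stream might look like the sketch below. The JSON payload shape and the `done` event are assumptions made for this example, not the project's wire format. In a Django view, a generator like this would be passed to `StreamingHttpResponse` with `content_type="text/event-stream"`.

```python
import json
from typing import Iterable, Iterator


def sse_events(tokens: Iterable[str]) -> Iterator[str]:
    """Wrap model tokens in Server-Sent Events frames.

    Each frame is a `data:` line followed by a blank line. JSON-encoding the
    payload keeps newlines inside a token from breaking the framing.
    """
    for token in tokens:
        payload = json.dumps({"token": token})
        yield f"data: {payload}\n\n"
    # Hypothetical end-of-stream marker so the client knows when to stop reading.
    yield "event: done\ndata: {}\n\n"
```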

09

Data Modelling

Django models own the relational side: users, roles, documents, conversations. Pinecone owns the vector side. The document model stores Pinecone vector IDs so we can delete or re-embed without a full index rebuild.
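The idea of tracking vector IDs on the document record can be sketched as below. A plain dataclass stands in for the Django model so the snippet runs without a configured project. The `document_id#chunk_index` ID scheme and the names `DocumentRecord`, `vector_id`, and `register_chunks` are hypothetical.

```python
from dataclasses import dataclass, field


def vector_id(document_id: str, chunk_index: int) -> str:
    """Hypothetical ID scheme: one stable ID per (document, chunk) pair."""
    return f"{document_id}#{chunk_index}"


@dataclass
class DocumentRecord:
    """Stand-in for the relational document row."""
    document_id: str
    namespace: str  # permission role that owns the document
    vector_ids: list[str] = field(default_factory=list)

    def register_chunks(self, chunk_count: int) -> list[str]:
        """Record IDs for freshly embedded chunks and return stale IDs to delete."""
        new_ids = [vector_id(self.document_id, i) for i in range(chunk_count)]
        keep = set(new_ids)
        stale = [v for v in self.vector_ids if v not in keep]
        self.vector_ids = new_ids
        return stale
```

When a document is re-embedded with fewer chunks, the returned stale IDs are exactly the vectors to delete from its namespace, so the rest of the index is left alone.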

10

API Layer

Django REST Framework handles the query endpoint. The view resolves namespaces from the user's roles and delegates to QueryService, which retrieves the top-k chunks from Pinecone and assembles the prompt. The view then streams the OpenAI response via StreamingHttpResponse.
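The retrieval and prompt-assembly half of that flow might be sketched like this. It is not the project's QueryService: sorting by similarity score stands in for the re-ranking step, whose method this write-up does not specify, and the prompt wording plus the names `Match`, `merge_matches`, and `build_messages` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Match:
    """One retrieved chunk with the fields needed for ranking and citation."""
    namespace: str
    document_id: str
    page: int
    score: float
    text: str


def merge_matches(per_namespace: dict[str, list[Match]], top_k: int = 5) -> list[Match]:
    """Combine per-namespace results and keep the best-scoring chunks overall."""
    combined = [m for matches in per_namespace.values() for m in matches]
    return sorted(combined, key=lambda m: m.score, reverse=True)[:top_k]


def build_messages(question: str, matches: list[Match]) -> list[dict[str, str]]:
    """Assemble a chat payload whose context blocks carry citation tags."""
    context = "\n\n".join(
        f"[{i}] ({m.document_id}, p.{m.page})\n{m.text}"
        for i, m in enumerate(matches, start=1)
    )
    # Hypothetical wording for the "answer only from context, cite sources" rule.
    system = (
        "Answer only from the numbered context below and cite sources as [n]. "
        "If the context does not contain the answer, say so.\n\n" + context
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": question},
    ]
```

The numbered tags give the frontend something stable to turn into citation chips.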

11

Database Functions

PostgreSQL functions handle conversation context fetching and bulk document status updates after ingestion.

12

Frontend Connection

A useChatStream hook manages the full lifecycle — sending a message, reading the SSE stream, appending tokens to the buffer, and handling errors. The component never touches fetch directly.

13

Lessons Learned

Namespace-per-role beats metadata filtering at scale

A single namespace with role metadata became the bottleneck at 500k+ vectors. Per-role namespaces dropped p95 query latency by 60% since each query scanned a fraction of the index.

Django ORM is a liability for bulk vector operations

Saving thousands of embedding records through the ORM was slow: one INSERT per object. We bypassed it using psycopg2's execute_values for the database writes and batched the Pinecone upserts at 100 vectors per call.

Auth0 roles should mirror Pinecone namespaces exactly

A custom role-to-namespace mapping table became a source of bugs whenever roles were renamed. Enforcing the convention that the Auth0 role name is the namespace name eliminated the mapping layer entirely.

Multi-turn context needs a hard token budget

Sending the full conversation history overflowed the context window on long sessions. We now cap the history at the last 10 messages and summarise older turns into a single system message.
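A minimal sketch of that cap, assuming chat messages are plain role/content dictionaries. The summariser is passed in as a callable because this write-up does not say how summaries are produced. The names `trim_history` and `MAX_RECENT` and the summary prefix are hypothetical.

```python
from typing import Callable

MAX_RECENT = 10  # message cap stated in the write-up

Message = dict[str, str]


def trim_history(
    history: list[Message],
    summarise: Callable[[list[Message]], str],
) -> list[Message]:
    """Keep the last MAX_RECENT messages and fold older ones into one system message."""
    if len(history) <= MAX_RECENT:
        return list(history)
    older, recent = history[:-MAX_RECENT], history[-MAX_RECENT:]
    summary = {
        "role": "system",
        "content": "Summary of earlier conversation: " + summarise(older),
    }
    return [summary] + recent
```

Note that this caps the message count, not the token count. A strict token budget would also need to measure the length of the retained messages.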
